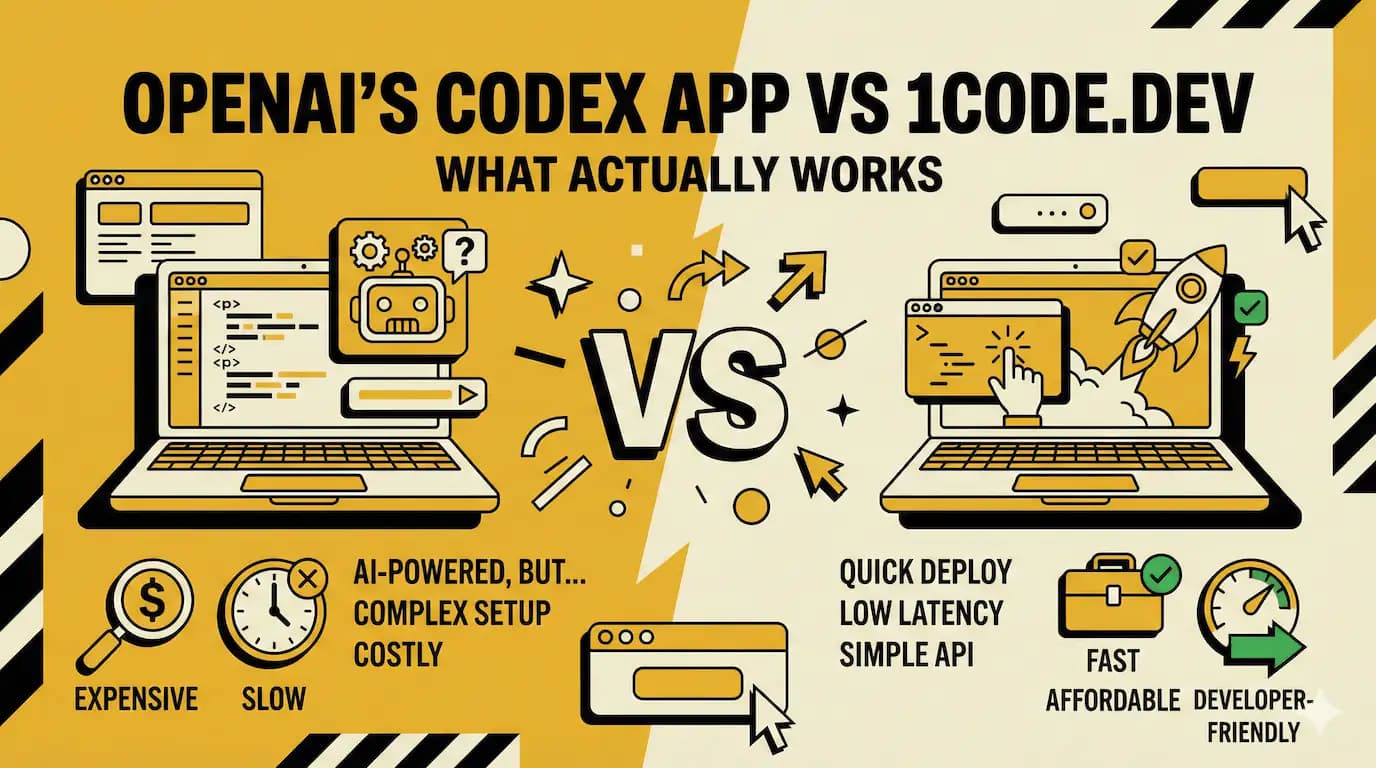

OpenAI's Codex App vs 1code.dev: What Actually Works

Sam Altman built an app with his own AI. Then he tweeted that it made him feel "a little useless."

That was February 1st. OpenAI launched the Codex desktop app four days ago. The CEO of the company that made it admitted the tool's suggestions were better than his own ideas.

And that's where things get interesting.

Because while everyone's talking about Codex, there's this open-source project called 1code.dev that's been quietly doing something similar since January. Same idea. Different approach. Built by developers who got tired of waiting.

Here's what actually happened and why it matters.

Two tools, same problem

OpenAI released Codex as a "command center" for AI coding agents. You can run multiple agents at once. Each one works on different tasks. The app helps you manage them without losing your mind.

1code.dev does almost the exact same thing. But it's open-source. And it launched first.

Both tools solve a real problem. Running one AI agent is manageable. Running five at the same time while trying to review their changes, debug their mistakes, and keep them from breaking each other? That's chaos.

I tried managing multiple Claude Code sessions last month. Had three terminal windows open. Two browser tabs. A notes app to track what each agent was doing. My desktop looked like a surveillance room. It was stupid.

You shouldn't need a spreadsheet to use AI coding tools.

That's what both of these tools figured out. But they went different directions with it.

What Codex actually is

OpenAI's version is polished. Native macOS app. Integrated terminal. Built-in worktree support so you can run agents in parallel without them stepping on each other.

The reactions on launch day were all over the place. Some developers loved it. Others said it felt rushed.

One Reddit user tested it on real projects and said it works but has rough edges. Another said they switched from Claude Code and haven't looked back.

But here's the thing. Codex is playing catch-up. Anthropic's Claude Code hit $1 billion in annualized revenue within six months. OpenAI is trailing in the AI coding space. This app is their attempt to gain ground.

The app itself does what it promises. You add projects. Start threads. Run multiple agents. Review diffs without leaving the window. It's functional.

But it's also slow sometimes. And some users said it misunderstands commands. One person asked it to merge files and it "merged all repositories into one text document."

That's not a bug. That's a fever dream.

The workflow problem

Most tutorials tell you AI coding tools make you faster. They don't mention the new problems they create.

Like this. You start an agent on a feature. It runs for twenty minutes. Makes sixty file changes. Some are good. Some break tests you forgot existed. Now you're in review hell.

Or you run two agents at once. They both edit the same file. Git merge conflicts everywhere. You spend an hour untangling what should have taken ten minutes.
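You can catch that second scenario before the merge. Here's a minimal sketch, not something either tool ships. It assumes you've already collected the list of files each agent changed (say, from `git diff --name-only` on each agent's branch). The function name and the agent names are made up for illustration.

```python
from collections import defaultdict


def find_overlapping_files(changes_by_agent):
    """Return {path: [agents...]} for every file touched by 2+ agents.

    `changes_by_agent` is a hypothetical mapping of agent name -> iterable
    of file paths that agent modified.
    """
    agents_by_file = defaultdict(set)
    for agent, paths in changes_by_agent.items():
        for path in paths:
            agents_by_file[path].add(agent)
    # Keep only files with more than one editor, in a stable order.
    return {
        path: sorted(agents)
        for path, agents in sorted(agents_by_file.items())
        if len(agents) > 1
    }


if __name__ == "__main__":
    changes = {
        "agent-auth": ["src/auth.py", "src/models/user.py"],
        "agent-billing": ["src/billing.py", "src/models/user.py"],
    }
    for path, agents in find_overlapping_files(changes).items():
        print(f"{path}: edited by {', '.join(agents)}")
```

Anything this flags is a file to review by hand first. It won't tell you whether the edits actually conflict. Only that two agents were in the same place.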

The promise was "vibe coding." The reality is "Git conflict archaeology."

Codex tries to fix this with worktrees. Each agent gets its own isolated workspace. You review changes one at a time. Approve what works. Reject what doesn't.
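If you haven't used worktrees, the idea is plain Git: one repository, several checked-out directories, each on its own branch. Here's a rough sketch of setting that up by hand. This is not how Codex does it internally. The `agent/<task>` branch naming, the sibling-directory layout, and the `main` base branch are all my assumptions. The function only builds the commands, so you can read them before running anything.

```python
import shlex
from pathlib import Path


def plan_worktrees(repo_dir, tasks, base="main"):
    """Build one `git worktree add` command per task.

    Each task gets its own branch (agent/<task>, a made-up convention)
    checked out in a sibling directory, so parallel agents never share
    a working tree.
    """
    # Resolve to an absolute path so `git -C` and the worktree path agree.
    repo = Path(repo_dir).resolve()
    commands = []
    for task in tasks:
        branch = f"agent/{task}"
        path = repo.parent / f"{repo.name}-{task}"
        commands.append(
            ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(path), base]
        )
    return commands


if __name__ == "__main__":
    for cmd in plan_worktrees("/work/myapp", ["login-form", "fix-search"]):
        print(shlex.join(cmd))
```

Point each agent at its own directory and they can't overwrite each other's files. You still have to merge the branches afterward, so the review work doesn't go away.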

It's better. But it's still a lot of babysitting.

1code.dev took a different angle.

What 1code actually does

Instead of building a proprietary app, they built a client for Claude Code. Open-source. You bring your own Claude API key. Run agents locally on Mac or in remote sandboxes on the web.

The remote sandbox thing is clever. You can check on agents from your phone. Live previews work on mobile too.

I like that they're not trying to own the whole stack. You can use it with Claude now. They're adding OpenAI Codex support soon. Whatever model wins, 1code adapts.

The UI looks like Cursor. Keyboard-first. Hotkeys for everything. Built by developers who wanted this for themselves, then open-sourced it.

Here's what's weird. They're planning features that sound better than what Codex shipped with. Bug bots that scan your changes. QA agents that check if new features break old ones. API access so you can trigger agents from Slack or GitHub.

And it's free. Well, you pay for Claude API calls. But the tool itself costs nothing.

The naming problem

Can we talk about how confusing this got?

OpenAI had a thing called Codex in 2021. It was the model behind GitHub Copilot. Then they deprecated it. Now they brought the name back for a completely different product.

Meanwhile there's a subreddit called r/codex. And Claude has Claude Code. And someone made a VS Code extension also called Codex.

I spent fifteen minutes yesterday trying to figure out if an old blog post was about the 2021 model or the 2026 app. Gave up. Closed the tab.

This is what happens when everyone names their AI coding tool after the word "code." We're going to run out of variations by 2027.

Why speed actually matters

You'd think speed doesn't matter much. The agent does the work. You wait. Who cares if it takes two minutes or five?

But I've used these things enough to know. Speed changes behavior.

When an agent responds fast, you iterate. Try something. See it fail. Adjust. Try again. You stay in the flow.

When it's slow, you context switch. Check Twitter. Answer Slack. By the time the agent finishes, you forgot what you asked it to do.

One Reddit thread complained that Codex takes three minutes per response. That's unusable. You can't maintain focus through three-minute waits.

Claude Code is faster for pair programming. Codex is supposedly better for long-running async tasks. Different tools for different workflows.

But honestly? I'd take fast and decent over slow and perfect any day.

The better-than-human thing

Sam Altman's tweet wasn't just humble bragging. It hit something real.

The AI suggested improvements he didn't think of. That's not scary because it replaced him. It's weird because it made him question his own ideas.

I felt this last week. Asked Claude to refactor a function. It came back with a solution that was cleaner than mine. Used a pattern I'd never considered. Worked perfectly.

For about five seconds I felt stupid. Then I realized that's the point.

The tool isn't supposed to make you feel smart. It's supposed to make your code better.

But that feeling lingers. Especially when you're debugging something for hours and the AI fixes it in thirty seconds.

Developers on Reddit are split. Some say Codex 5.2 is better than Claude at debugging complex logic. Others say Claude writes better code but Codex spots more issues.

My coworker tried to use only AI for a week. Made it three days. Said it felt like being a code reviewer for a junior developer who never learns.

The actual competition

Everyone's comparing Codex to Claude Code. But the real competition is Cursor.

Cursor has been doing this longer. Better UI. Faster. More integrated. One developer built the same app in both VS Code and Cursor. Cursor was 2.5x faster.

And Cursor isn't sitting still. They're shipping updates constantly.

1code is trying to be the open-source alternative. Works with any model. No vendor lock-in. Betting that flexibility matters more than polish.

Codex is betting on OpenAI's models getting better. And on the fact that many developers already have ChatGPT Plus subscriptions.

But right now? Anthropic is winning. Claude Code hit $1 billion annualized revenue in six months. That's not a lead. That's a gap.

Where this goes

Both tools will add more models. More integrations. More automation.

1code's roadmap includes bots that auto-fix Sentry errors. Imagine getting a bug report and having an agent open a PR before you even look at it. That's where this is headed.

Codex will get faster. Better at understanding context. Probably add team features for enterprises.

The real question is whether developers want one tool or many. Do you want Cursor for editing, Codex for agents, 1code for parallel work? Or one thing that does it all badly?

I don't know. But I know what I'm doing now. Using Claude Code through 1code for most work. Switching to Codex when I need heavy debugging. Cursor for quick edits.

It's messy. Three different tools. Three different workflows. But each one's best at something specific.

The honest part

Most people don't need this.

If you're writing straightforward CRUD apps or fixing obvious bugs, regular Copilot is fine. These multi-agent tools are overkill.

They're for people building complex systems. Or managing multiple features at once. Or dealing with codebases so large that context windows matter.

If you're solo and working on one thing at a time, save your money. A good linter and Stack Overflow will get you further.

And here's what nobody's saying. These tools create new problems. You spend less time coding and more time reviewing. Managing agents is work. Sometimes more work than just writing the code yourself.

The workflow changes. You become a coordinator. Not a builder.

That's fine for some people. Annoying for others.

I still think about that Sam Altman tweet. Should have been a win. His company's tool worked so well it surprised him. But instead he felt useless.

Maybe that's the trade-off. Better tools. Weirder feelings about what we actually contribute.

My search app still returns the wrong results sometimes. But at least I know which part of the code is broken. For now.
